Naveena Gorre, MS

MAI-CARE API

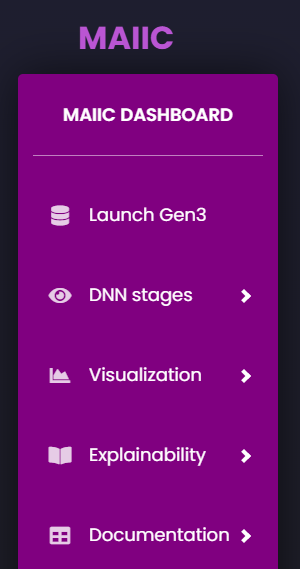

Developing machine learning models for clinical applications from scratch can be cumbersome: users bring varying levels of expertise, and even seasoned developers and researchers often face incompatible frameworks and data preparation issues. This is further complicated in diagnostic radiology and oncology applications, given the heterogeneous nature of the input data and the specialized task requirements. Our goal is to provide clinicians, researchers, and early AI developers with a modular, user-friendly software tool that meets their needs to explore, train, and test AI algorithms and to interpret the results. This latter step involves incorporating interpretability and explainability methods that allow users to visualize performance and interpret predictions across the different neural network layers of a deep learning algorithm.

To demonstrate this approach, we developed the Moffitt AI Interface for Cancer research (MAI-CARE). MAI-CARE is built on Django, a Python web framework, and is used in combination with a data management platform or data commons such as Gen3. The tool allows users to test, visualize, and interpret how a model is performing. The major highlight of CRP10AII is its capability for visualization and interpretability of black-box AI algorithms. In addition, MAI-CARE provides many convenient features for model building and evaluation, including: (1) querying and acquiring data for the specific application (classification, segmentation) from the data platform (Gen3 here); (2) training AI models from scratch or using pre-trained models such as VGGNet, AlexNet, and BERT for transfer learning, then testing model predictions and assessing performance, for example with receiver operating characteristic (ROC) curves; (3) interpreting AI models using Shapley values and LIME; and (4) visualizing heatmaps and activation maps of individual neural network layers.
Inexperienced users can more easily pre-process data, build and train AI models for their own use cases, and then visualize and explore those models, all within a single end-to-end pipeline. MAI-CARE will be provided as an open-source tool, and we expect to continue developing it based on user feedback.
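To make the idea of Shapley-value interpretation more concrete, here is a minimal sketch in plain Python. It is not taken from MAI-CARE, and practical tools usually rely on approximations rather than this exact enumeration. The names `shapley_values` and `toy_model`, the all-zero baseline, and the choice to replace "missing" features with baseline values are all assumptions made for illustration.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, instance, baseline):
    """Exact Shapley attributions for a single prediction.

    Features outside a coalition are replaced by their baseline value
    (an illustrative assumption). The cost grows as 2**n, so this is only
    practical for a handful of features.
    """
    n = len(instance)

    def value(coalition):
        # Model output when only the features in `coalition` take their real values.
        mixed = [instance[i] if i in coalition else baseline[i] for i in range(n)]
        return predict(mixed)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Standard Shapley weight for a coalition of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                # Marginal contribution of feature i when added to coalition s.
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


def toy_model(x):
    # Hypothetical stand-in for a trained model: linear terms plus one interaction.
    return 2.0 * x[0] + 1.0 * x[1] + 0.5 * x[0] * x[2]


instance = [1.0, 2.0, 4.0]
baseline = [0.0, 0.0, 0.0]
phi = shapley_values(toy_model, instance, baseline)
print(phi)
```

For this toy model the attributions come out to about 3, 2, and 1: the interaction term between the first and third features is split evenly between them, and the attributions sum to the difference between the prediction for the instance and the prediction for the baseline.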

Publications

- SPIE Defense and Commercial Sensing conference paper titled “Identification of Bone Cancer In Canine Thermograms”. This paper discusses different combinations of Laws features and texture features for pattern recognition and image classification. She improved overall sensitivity compared with earlier research that used texture features alone.

  Article: https://doi.org/10.1117/12.2554490

- SPIE Medical Imaging conference paper titled “User experience evaluation for MIDRC AI Interface”. This paper evaluates the capabilities of CRP10AII and its related human-API interaction factors. The evaluation investigates several aspects of the API, including (i) robustness and ease of use; (ii) how much visualization helps in decision-making tasks; and (iii) further improvements needed for early-stage AI researchers with different levels of medical imaging and AI expertise. Users initially had trouble testing the API; these problems were resolved by providing additional explanations. The findings show that the API's options are generally easy to understand and use, and helpful in decision-making tasks, for users with and without experience in medical imaging and AI, although users differ in how they understand and use the various options. We also collected additional input, such as requests for more information fields and more interactive components, to make the API more generalizable and customizable.

  Article: http://dx.doi.org/10.1117/12.2651812

- Research article titled “MIDRC CRP10 AI interface—an integrated tool for exploring, testing and visualization of AI models”. This paper discusses the AI Interface developed as part of the MIDRC consortium.

  Article: https://doi.org/10.1088/1361-6560/acb754

Presentations

Naveena presented her paper, “User experience evaluation for MIDRC AI Interface”, at SPIE Medical Imaging 2023.

2-2-24 SPIE Medical Imaging 2024 Conference

On February 20, 2024, Naveena Gorre and Bradley Basik from Dr. El Naqa's lab attended the SPIE Medical Imaging 2024 conference and presented the MIDRC AI Interface, a one-stop shop for deep learning pipelines, at the live demo workshop session.